SEO Content Pipeline

Live: multi-agent AI system that automates content production from keyword research to WordPress publishing

The Challenge

Content production for two travel sites, TravelEspain.com (EN) and LaplandTripPlanner.com (EN/FI), was a bottleneck. Keyword research, content briefs, writing, quality assurance, and WordPress publishing took several hours of manual work per article. Keyword research revealed hundreds of search terms with no matching content, and dozens of existing pages whose internal linking, metadata, and content structure needed optimization.

Why the Manual Process Wasn't Enough

The manual process didn't scale. The sites compete with major travel brands, so content needs to be produced systematically and at high quality, not sporadically whenever time allows. Additionally, SEO decisions (what to write, which keywords, in what order) were based on gut feeling rather than data.

Process Mapping

I mapped the entire content production chain end-to-end: where the data comes from (Google Search Console, DataForSEO keyword research, site crawl), what decisions are made at each stage, and which steps are mechanical vs. creative work. I identified that most steps can be automated, but a human still needs to make the final publishing decision.

Data-Driven Content Strategy

I moved from reactive content production to a data-driven approach. Keywords are grouped into clusters (e.g., "Barcelona", "Canary Islands"), each with a pillar page and supporting pages. The system automatically scores opportunities based on search volume, competition, and search intent, so the writing order is based on data rather than guesswork.
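As a rough illustration of how scoring by search volume, competition, and search intent could work, here is a minimal sketch. The field names, the intent weights, and the formula itself are assumptions made for this example; the actual scoring logic of the pipeline is not documented here.

```typescript
// Hypothetical shape of a keyword opportunity; all field names are assumptions.
type SearchIntent = "informational" | "commercial" | "transactional";

interface KeywordOpportunity {
  keyword: string;
  cluster: string;
  searchVolume: number; // estimated monthly searches
  competition: number;  // 0 (easy) to 1 (hard)
  intent: SearchIntent;
}

// Illustrative weights only: pages closer to a purchase are valued higher.
const INTENT_WEIGHT: Record<SearchIntent, number> = {
  informational: 1.0,
  commercial: 1.5,
  transactional: 2.0,
};

function scoreOpportunity(k: KeywordOpportunity): number {
  // Log-damp the volume so a single huge keyword does not dominate the ranking.
  const volume = Math.log(1 + Math.max(k.searchVolume, 0));
  // Clamp competition to [0, 1] and invert it: low competition scores higher.
  const ease = 1 - Math.min(Math.max(k.competition, 0), 1);
  return volume * ease * INTENT_WEIGHT[k.intent];
}

// Returns a new array sorted from best to worst opportunity.
function rankOpportunities(items: KeywordOpportunity[]): KeywordOpportunity[] {
  return [...items].sort((a, b) => scoreOpportunity(b) - scoreOpportunity(a));
}
```

Sorting the whole backlog with `rankOpportunities` gives a writing order that can be inspected and tuned by adjusting the weights.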

Three-Agent Pipeline

Off-the-shelf SEO tools (Surfer, Frase) do individual steps well but don't cover the entire chain. Additionally, their AI writing quality is weak — the generated text almost always requires a rewrite. I built a custom pipeline where three AI agents (SEO architect, writer, editor) work together through a quality assurance loop. The editor scores each article and sends it back to the writer until quality exceeds the threshold.
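The writer and editor loop can be sketched roughly as follows. This is a simplified illustration, not the pipeline's actual code: the agent signatures, the 0 to 100 score scale, the default threshold, and the round cap are all assumptions, and the agents are modeled as plain synchronous functions where a real system would make asynchronous LLM calls.

```typescript
interface ArticleBrief { keyword: string; contentType: string; }
interface EditorReview { score: number; feedback: string; }

// Hypothetical agent signatures; in practice these would wrap LLM calls.
type WriterAgent = (brief: ArticleBrief, feedback: string | null) => string;
type EditorAgent = (draft: string, brief: ArticleBrief) => EditorReview;

interface QaResult { draft: string; score: number; rounds: number; passed: boolean; }

function runQaLoop(
  brief: ArticleBrief,
  write: WriterAgent,
  edit: EditorAgent,
  threshold = 80, // assumed 0-100 quality scale
  maxRounds = 3,  // assumed cap so the loop always terminates
): QaResult {
  let feedback: string | null = null;
  let draft = "";
  let score = 0;
  let rounds = 0;
  while (rounds < maxRounds) {
    rounds++;
    draft = write(brief, feedback);     // first round has no feedback
    const review = edit(draft, brief);  // editor scores the draft
    score = review.score;
    if (score > threshold) {
      return { draft, score, rounds, passed: true };
    }
    feedback = review.feedback;         // send the critique back to the writer
  }
  // Threshold never exceeded: return the last draft, flagged as not passed.
  return { draft, score, rounds, passed: false };
}
```

Capping the number of rounds is a design choice made for this sketch. Without it, a draft that never exceeds the threshold would loop indefinitely, whereas a result with `passed: false` can be handed to a human instead.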

Different content types (guide, comparison, buying page) have their own specialized prompts that guide the writer agent. A travel guide is written with a different structure and tone than a hotel comparison or "10 best restaurants" list. This is a major advantage over generic AI writing tools, where all content comes from the same mold.
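One plausible way to organize content-type-specific prompts is a template table keyed by content type. The sketch below assumes three types matching the ones named above; the section outlines, tone descriptions, and the `buildWriterPrompt` helper are invented for illustration and are not the prompts used in the actual system.

```typescript
type ContentType = "guide" | "comparison" | "buying";

interface PromptTemplate {
  tone: string;
  structure: string[]; // section outline, in order
}

// Illustrative templates only; real prompts would be far more detailed.
const TEMPLATES: Record<ContentType, PromptTemplate> = {
  guide: {
    tone: "personal and practical, like advice from a local",
    structure: ["Overview", "When to go", "What to see", "Practical tips"],
  },
  comparison: {
    tone: "neutral and evidence-based",
    structure: ["Summary table", "Option-by-option review", "Who each option suits"],
  },
  buying: {
    tone: "direct and decision-oriented",
    structure: ["Top pick", "Alternatives", "How to book"],
  },
};

function buildWriterPrompt(type: ContentType, keyword: string): string {
  const template = TEMPLATES[type];
  return [
    `Content type: ${type}`,
    `Target keyword: "${keyword}"`,
    `Tone: ${template.tone}`,
    "Sections, in order:",
    ...template.structure.map((section, i) => `${i + 1}. ${section}`),
  ].join("\n");
}
```

Because `TEMPLATES` is typed as `Record<ContentType, PromptTemplate>`, adding a new content type without a template becomes a compile-time error.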

Tools and Modules

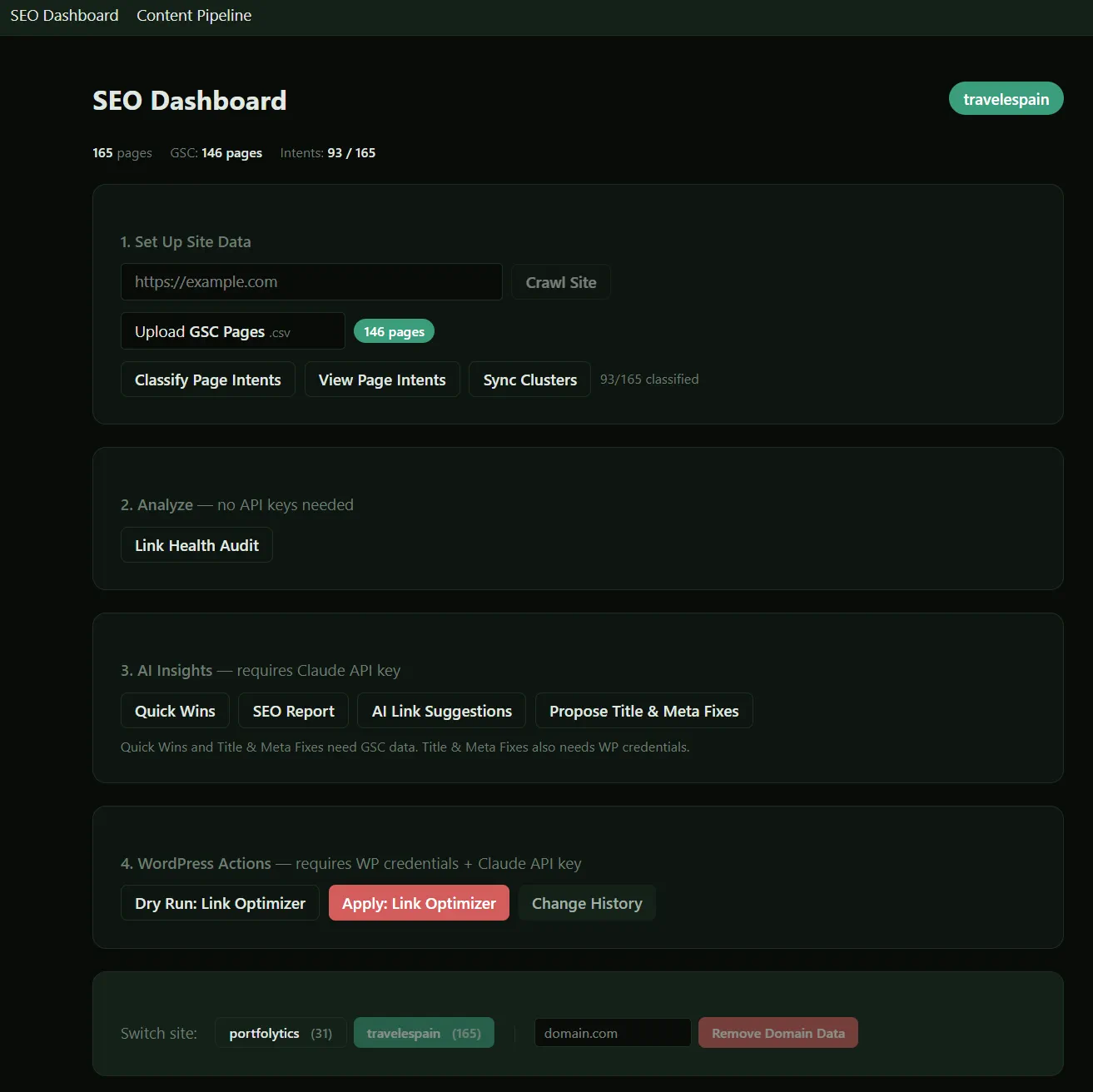

Modular tools covering the chain from keyword research to WordPress draft:

- Data collection: GSC data, site crawl, and keyword research (DataForSEO API) update the database

- Analytics: Quick wins, internal linking opportunities, CTR optimizations, and cluster analysis

- Content production: From keyword through brief to finished WordPress draft

- Dashboard: Next.js control panel showing clusters, opportunities, briefs, and drafts

The tools are independent and modular: they can be used individually or chained as needed. A human still does the final review and makes the publishing decision.
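To make "used individually or chained" concrete, here is a small sketch in which each tool is a function over a shared context object. The `PipelineContext` shape and the `makeBrief`, `makeDraft`, and `chain` names are hypothetical and do not reflect the real tool interface.

```typescript
// Hypothetical shared context passed between tools.
interface PipelineContext {
  keyword: string;
  brief?: string;
  draft?: string;
  log: string[]; // names of the tools that have run
}

type Tool = (ctx: PipelineContext) => PipelineContext;

// Each tool returns a new context instead of mutating its input.
const makeBrief: Tool = (ctx) => ({
  ...ctx,
  brief: `Brief for ${ctx.keyword}`,
  log: [...ctx.log, "brief"],
});

const makeDraft: Tool = (ctx) => {
  if (!ctx.brief) throw new Error("makeDraft needs a brief");
  return { ...ctx, draft: `Draft based on: ${ctx.brief}`, log: [...ctx.log, "draft"] };
};

// Compose any number of tools into a single tool, run left to right.
function chain(...tools: Tool[]): Tool {
  return (ctx) => tools.reduce((acc, tool) => tool(acc), ctx);
}
```

With this shape, `makeBrief({ keyword: "Barcelona hotels", log: [] })` runs one tool on its own, while `chain(makeBrief, makeDraft)` runs both in sequence.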

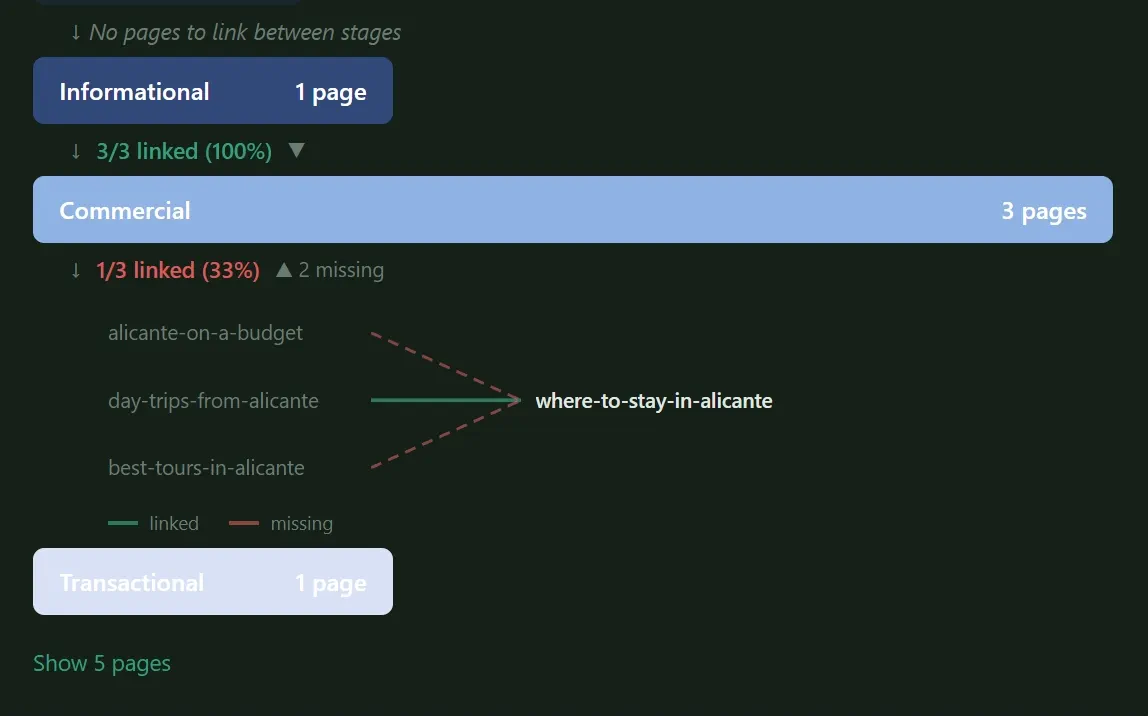

This view shows internal linking within a single cluster and how pages with different search intents are linked together. The goal is to guide visitors through a funnel toward commercial pages: internal links send traffic to those pages directly and pass along the SEO value of higher-traffic pages. Maintaining this kind of linking by hand is extremely difficult without a good tool.
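The funnel idea can be expressed as a simple rule: every page should link to the pages in its cluster that sit deeper in the funnel. The sketch below finds such links when they are missing. The three-stage intent model, the `ClusterPage` fields, and the ordering by traffic are assumptions for illustration, not a description of the actual tool.

```typescript
type FunnelStage = "informational" | "commercial" | "transactional";

interface ClusterPage {
  url: string;
  stage: FunnelStage;
  monthlyVisits: number;
  linksTo: string[]; // URLs this page already links to
}

interface LinkSuggestion { from: string; to: string; }

const STAGE_ORDER: Record<FunnelStage, number> = {
  informational: 0,
  commercial: 1,
  transactional: 2,
};

// Suggest missing links from each page to pages deeper in the funnel.
// Sources with the most traffic come first, since they have the most to pass on.
function suggestFunnelLinks(pages: ClusterPage[]): LinkSuggestion[] {
  const suggestions: LinkSuggestion[] = [];
  const byTraffic = [...pages].sort((a, b) => b.monthlyVisits - a.monthlyVisits);
  for (const from of byTraffic) {
    for (const to of pages) {
      const isDeeper = STAGE_ORDER[to.stage] > STAGE_ORDER[from.stage];
      const alreadyLinked = from.linksTo.indexOf(to.url) !== -1;
      if (isDeeper && !alreadyLinked) {
        suggestions.push({ from: from.url, to: to.url });
      }
    }
  }
  return suggestions;
}
```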

The Human Role

The system doesn't try to automate everything. A few steps are intentionally manual, because human judgment is better or automation doesn't produce sufficient quality:

- Cluster structure: A human defines clusters and their page structures. When the structure is explicit, missing content shows up as gaps immediately rather than hiding behind algorithms.

- Search intent: Classifying pages into search intent stages is mostly manual but fast. The system suggests classifications; a human confirms or corrects.

- Data import: GSC and GA4 data are imported as CSV files. MCP integrations are on the development roadmap.

- Text polishing: A human smooths out the language and adds personal experiences and opinions.

- Adding images: For a travel site, using original photos is important.
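The point about explicit cluster structures can be shown in a few lines: once a human has listed the planned pages, finding gaps is a plain comparison against what is published. The `ClusterPlan` shape and slugs below are hypothetical.

```typescript
interface PlannedPage {
  slug: string;
  role: "pillar" | "supporting";
}

// A human-defined cluster: one pillar page plus its supporting pages.
interface ClusterPlan {
  name: string;
  pages: PlannedPage[];
}

// Returns the planned pages that have not been published yet.
function findGaps(plan: ClusterPlan, publishedSlugs: string[]): PlannedPage[] {
  return plan.pages.filter((page) => publishedSlugs.indexOf(page.slug) === -1);
}

// Example plan with made-up slugs.
const barcelonaPlan: ClusterPlan = {
  name: "Barcelona",
  pages: [
    { slug: "barcelona-travel-guide", role: "pillar" },
    { slug: "best-hotels-barcelona", role: "supporting" },
    { slug: "barcelona-day-trips", role: "supporting" },
  ],
};
```

Calling `findGaps(barcelonaPlan, ["barcelona-travel-guide"])` would return the two supporting pages, which is the list of content still to be written for that cluster.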

Impact

Individual article production time dropped from several hours to minutes. Content quality improved because every article goes through a systematic QA cycle rather than depending on a single writing session. Internal links and metadata stay in order automatically. The site's content strategy is now data-driven: the system tells you what to write next and why. The pipeline is live and actively powering content on TravelEspain.com and LaplandTripPlanner.com.

Technical Stack

Next.js (App Router) + SQLite + Claude API + DataForSEO. Three-agent pipeline (architect → writer → editor) with a quality assurance loop. Content-type-specific prompts guide the writer. ~15 modular tools in the Agent SDK interface. Dashboard as a control panel.

Key Learning

The biggest value of automation isn't the speed of a single article — it's that the content strategy is based on data rather than gut feeling. When the system tells you what's worth writing and why, resources are allocated correctly.